High Availability and Performance Engineering for Modern Web Systems

Study Technologies is an informational platform dedicated to understanding how modern web infrastructures remain stable, fast and reliable under real-world conditions.

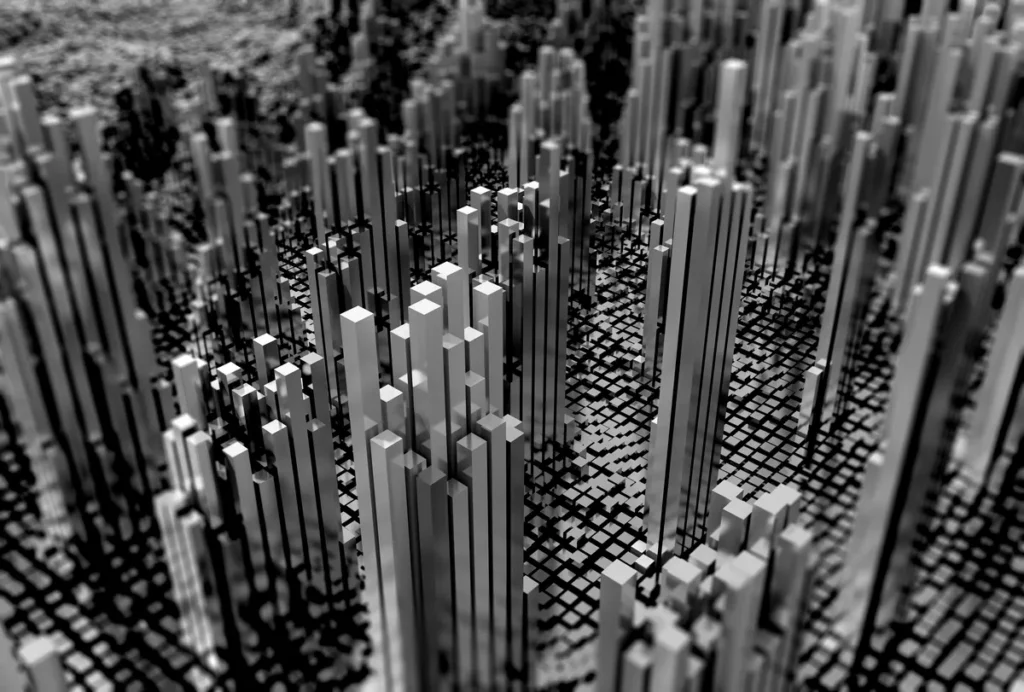

As digital services grow, traffic patterns become less predictable. Sudden spikes, global audiences, automated systems and malicious activity all create pressure on infrastructure. This site explores the technical foundations that allow platforms to maintain uptime and performance even during volatile traffic events.

High availability is not a feature. It is an architectural discipline.

At its core, high availability means designing systems that continue operating even when individual components fail. This requires eliminating single points of failure, introducing redundancy and planning for automatic failover across infrastructure layers.

These principles are collectively known as high availability architecture: redundancy, fault tolerance and failover mechanisms work together to reduce the risk of downtime.

In practice, high availability includes:

Multi-zone or multi-region deployment

Database replication strategies

Load-balanced application layers

Distributed storage systems

The objective is simple: failures must be isolated, not contagious.
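As an illustration of automatic failover, the following minimal sketch tries a list of redundant replicas in order and returns the first successful response. The function `call_with_failover` and the two simulated zones are hypothetical names chosen for this example, and real failover usually also involves health checks and timeouts that are left out here.

```python
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


def call_with_failover(replicas: Sequence[Callable[[], T]]) -> T:
    """Try each replica in order and return the first successful result."""
    last_error: Optional[Exception] = None
    for replica in replicas:
        try:
            return replica()
        except Exception as exc:
            # The failure stays isolated to this replica; move on to the next.
            last_error = exc
    raise RuntimeError("all replicas failed") from last_error


def zone_a() -> str:
    # Simulated outage in the first zone (hypothetical).
    raise ConnectionError("zone-a unreachable")


def zone_b() -> str:
    # Healthy replica in a second zone (hypothetical).
    return "served from zone-b"


result = call_with_failover([zone_a, zone_b])
print(result)
```

The caller never sees the outage in the first zone, which is the sense in which the failure is isolated rather than contagious.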

High Availability Foundations

Performance Under Load

Performance engineering becomes critical when systems operate under high concurrency. A platform that performs well at low traffic may degrade rapidly when requests multiply.

Performance under load depends on:

Efficient backend queries

Smart caching strategies

Controlled resource consumption

Optimized request handling

Traffic surges may be organic, such as marketing campaigns or product launches. They may also be artificial, generated by automated systems or malicious activity. In either case, when traffic grows faster than infrastructure capacity, latency increases and user experience degrades.

Understanding how load affects systems requires measuring response times, throughput and saturation levels. Systems should be stress-tested before growth exposes weaknesses.
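A small sketch of what such a measurement might look like: given the latencies recorded during a test window, it reports throughput and latency percentiles. The helper names, the sample values and the nearest-rank percentile method are assumptions made for this example. Saturation (for instance CPU or connection pool usage) would come from the host or runtime and is not modelled here.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile of the samples, with p between 0 and 100."""
    if not samples:
        raise ValueError("no samples recorded")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def summarize(latencies_ms: Sequence[float], window_s: float) -> Dict[str, float]:
    """Summarize one measurement window of completed requests."""
    return {
        "throughput_rps": len(latencies_ms) / window_s,
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
    }


# Hypothetical latencies (milliseconds) collected over a 2-second window.
latencies = [12, 15, 11, 240, 14, 13, 16, 12, 18, 900]
summary = summarize(latencies, window_s=2)
print(summary)
```

In this sample the median looks healthy while the 95th percentile does not, which is why tail latency is usually tracked alongside the average.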

Monitoring and Service Objectives

Availability and performance cannot be improved without visibility.

Monitoring frameworks track key indicators such as latency, error rates and system saturation. These metrics are often structured around service level objectives, commonly abbreviated as SLOs.

SLO methodology helps teams define acceptable thresholds and measure reliability against concrete targets. Observability tools provide the data needed to detect anomalies early, before they escalate into outages.
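To make the idea of a concrete target tangible, here is a minimal sketch of an error budget calculation for an availability SLO. The function name, the 99.9% target and the request counts are illustrative assumptions, not figures from any particular system.

```python
from typing import Dict, Union


def error_budget_report(
    slo_target: float, total_requests: int, failed_requests: int
) -> Dict[str, Union[float, bool]]:
    """Compare observed failures with the failures the SLO allows."""
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be a fraction between 0 and 1")
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    allowed_failures = (1 - slo_target) * total_requests
    return {
        "availability": 1 - failed_requests / total_requests,
        "allowed_failures": allowed_failures,
        "budget_consumed": failed_requests / allowed_failures,
        "slo_met": failed_requests <= allowed_failures,
    }


# Hypothetical month: 1,000,000 requests, 250 failures, 99.9% target.
report = error_budget_report(0.999, 1_000_000, 250)
print(report)
```

With these numbers roughly 1,000 failures are allowed, so about a quarter of the budget has been spent and the objective is still met.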

Early detection is particularly important in cases of traffic anomalies or infrastructure instability.

Scalability and Caching Strategies

Scalability determines how efficiently a system adapts to demand growth.

Horizontal scaling distributes traffic across multiple instances. Autoscaling policies add or remove resources dynamically. However, adding instances in front of an inefficient component, such as a slow query or a contended database, often increases cost without resolving the core bottleneck.
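One common way to express an autoscaling policy is a proportional rule: scale the instance count by the ratio of observed load to target load, then clamp the result to configured bounds. The sketch below assumes this rule; the function name, the default bounds and the utilization figures are hypothetical.

```python
import math


def desired_instances(
    current: int,
    observed_load: float,
    target_load: float,
    min_instances: int = 1,
    max_instances: int = 20,
) -> int:
    """Proportional scaling: ceil(current * observed / target), clamped."""
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    proposed = math.ceil(current * observed_load / target_load)
    return max(min_instances, min(max_instances, proposed))


# 4 instances at 90% average CPU with a 60% target: scale out.
scale_out = desired_instances(4, observed_load=90, target_load=60)

# 6 instances at 20% with a 60% target: scale in, but not below the floor of 3.
scale_in = desired_instances(6, observed_load=20, target_load=60, min_instances=3)

print(scale_out, scale_in)
```

The minimum and maximum bounds matter as much as the formula: the floor keeps redundancy during quiet periods and the ceiling caps cost during a surge.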

Caching plays a central role in performance optimization. Reverse proxies, edge caching and content delivery networks reduce load on origin servers by serving content closer to users. A content delivery network applies this model at scale: geographically distributed edge nodes hold cached copies of content, which improves performance and absorbs traffic surges.

By delivering cached responses at the network edge, platforms lower latency, decrease origin load and increase resilience during peak traffic periods.
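The effect on origin load can be sketched with a small time-to-live (TTL) cache. This is a simplified in-process stand-in for what a reverse proxy or edge node does, not a model of any specific product; the class `TTLCache`, the `render` function and the injected fake clock are all assumptions made so the example is deterministic.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Serve stored responses until they expire, then refetch from the origin."""

    def __init__(self, ttl_s: float, clock: Callable[[], float] = time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock
        self.origin_hits = 0
        self._store: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str, fetch_from_origin: Callable[[str], Any]) -> Any:
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # fresh copy: the origin is not contacted
        value = fetch_from_origin(key)
        self.origin_hits += 1
        self._store[key] = (now + self.ttl_s, value)
        return value


def render(path: str) -> str:
    # Hypothetical origin handler.
    return f"<html>{path}</html>"


fake_now = [0.0]  # controllable clock for the example
cache = TTLCache(ttl_s=30, clock=lambda: fake_now[0])

cache.get("/home", render)         # miss: goes to the origin
cache.get("/home", render)         # hit: served from the cache
fake_now[0] = 31.0
page = cache.get("/home", render)  # expired: goes to the origin again
print(cache.origin_hits)
```

Three requests reach the cache but only two reach the origin. With a realistic ratio of reads to expirations, the reduction in origin load is far larger.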

Traffic Spikes and Abuse Resistance

Not all traffic surges are healthy.

Some spikes result from automated abuse, coordinated saturation attempts or large-scale distributed attacks. In a denial-of-service attack, excessive requests are sent deliberately to exhaust system resources and disrupt availability.

When malicious traffic overwhelms bandwidth or backend resources, service stability is directly threatened. Simply scaling servers is not sufficient if hostile flows reach the origin unchecked.

Infrastructure-level filtering and advanced DDoS protection can mitigate abnormal traffic upstream, absorbing volumetric floods and limiting the impact of application-layer saturation before it degrades core systems.
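One building block often used to limit application-layer saturation is per-client rate limiting. The sketch below uses a token bucket, which permits short bursts while capping the sustained request rate. It is an illustrative assumption about how such a limit might be implemented, not a description of any particular protection service, and it does nothing against volumetric floods, which have to be absorbed upstream. The class name, rate and capacity are hypothetical.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_s` tokens per second."""

    def __init__(
        self,
        rate_per_s: float,
        capacity: float,
        clock: Callable[[], float] = time.monotonic,
    ):
        self.rate_per_s = rate_per_s
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill according to elapsed time, never exceeding the capacity.
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_s)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


fake_now = [0.0]  # controllable clock for the example
bucket = TokenBucket(rate_per_s=1, capacity=3, clock=lambda: fake_now[0])

burst = [bucket.allow() for _ in range(5)]  # 5 requests at the same instant
fake_now[0] = 2.0
after_pause = bucket.allow()                # 2 seconds later, tokens have refilled
print(burst, after_pause)
```

In practice one bucket would be kept per client key (for example per IP address or API token), so that a single abusive source exhausts only its own allowance.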

The objective is not to eliminate traffic volatility entirely. It is to ensure that legitimate users remain unaffected, even when hostile conditions increase pressure on the infrastructure.

Contact

For editorial inquiries, collaboration opportunities or professional discussions related to high availability and performance engineering, you may contact us through our form. Study Technologies is an informational resource focused on infrastructure resilience, scalability and traffic stability in modern web environments.